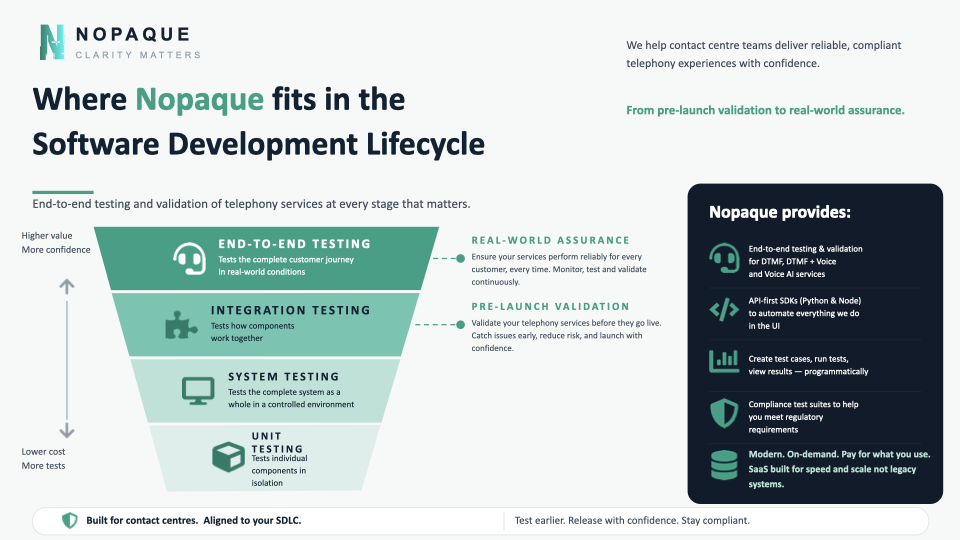

Why we flip the testing pyramid for contact centres, and where Nopaque fits in your SDLC

A pyramid that's pointing the wrong way

If you've spent any time in a software engineering team, you've seen the testing pyramid. Lots of cheap unit tests at the base, fewer integration tests in the middle, a thin sliver of end-to-end tests at the top. It's a sensible shape for most software. The end-to-end tests are slow, brittle and expensive, so you do as few of them as you can get away with.

For contact centres, that pyramid points the wrong way.

A telephony experience is mostly interface. It's a customer's voice, a phone network they didn't choose, an agentic LLM trying to reason in real time, an IVR menu that has to recognise speech and DTMF interchangeably, a CRM lookup that needs to come back in under a second, and a callback into a queue that has to actually connect to a human. Almost everything that can go wrong goes wrong at a boundary between two of those things.

A unit test on your prompt template won't tell you that the bot mishears "four" as "for" when the caller is in a noisy car. A green CI build won't tell you that your new TTS voice now reads "ID-double-three" as "I D thirty-three". You can have a perfectly tested system that still fails its only job: helping a customer get something done on the first call.
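To make that gap concrete, here is a small sketch. The `parse_digits` helper and both transcripts are hypothetical, written for illustration rather than taken from any real stack. The parser passes its unit test on clean, typed input and still quietly drops a digit when the recogniser hands back the homophone.

```python
# Hypothetical digit parser of the kind an IVR or agent handler might use.
DIGIT_WORDS = {
    "zero": "0", "one": "1", "two": "2", "three": "3", "four": "4",
    "five": "5", "six": "6", "seven": "7", "eight": "8", "nine": "9",
}

def parse_digits(transcript: str) -> str:
    """Turn spoken digit words into a digit string, ignoring unknown words."""
    words = transcript.lower().split()
    return "".join(DIGIT_WORDS[w] for w in words if w in DIGIT_WORDS)

# The unit test: clean, typed input. It passes.
assert parse_digits("four two seven") == "427"

# An illustrative transcript from a noisy line: "four" came back as "for".
heard = parse_digits("for two seven")
print(heard)  # prints 27: the leading digit is silently lost
```

Nothing in the unit test is wrong. It just never sees the audio, so it can't see the failure.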

So we drew the pyramid the other way up. Real-world assurance at the wide end. Unit tests still at the bottom, doing useful work, but not pretending to be the thing that proves the experience is correct.

What Nopaque does at each layer

The infographic above is essentially a map of where we earn our keep. Here it is, top to bottom.

End-to-end testing, real-world assurance. This is our home. We make actual phone calls into your service, over the PSTN, from a synthetic customer that behaves like a real one. We exercise the whole journey. Greeting, intent capture, authentication, the bit where a CRM lookup is supposed to happen, the handoff to a human if needed. We do it on demand and we do it on a schedule. When something regresses, you find out before your customers do.

Integration testing, pre-launch validation. Before a release goes live, we validate the seams. Does DTMF still work after you swapped your speech recogniser? Does the new agentic flow still hand off cleanly to the legacy queue when a caller asks for "an actual person"? Does your retry logic still retry? This is the layer most teams skip, because their existing test tooling can't dial a phone. Ours can.

System testing. Largely your CI's job, but we play nicely with it. The TotalPath SDKs (Python and Node) let you call our APIs from inside the same pipeline that builds your IVR or your agent prompts, so a system-level deploy can fail before it ever reaches a customer.
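As a rough sketch of what that pipeline gate could look like: the code below deliberately does not use the real SDK. `CallResult`, its fields and `gate` are hypothetical names, and the sample results are stand-ins for whatever your test calls actually return.

```python
import sys
from dataclasses import dataclass

@dataclass
class CallResult:
    """Hypothetical outcome of one test call; field names are assumptions."""
    journey: str
    passed: bool
    detail: str = ""

def gate(results: list[CallResult]) -> int:
    """Return a process exit code: 0 if every call passed, 1 otherwise."""
    failures = [r for r in results if not r.passed]
    for r in failures:
        # Report each failed journey on stderr so it shows up in the CI log.
        print(f"FAIL {r.journey}: {r.detail}", file=sys.stderr)
    return 1 if failures else 0

# Stand-in data; in a pipeline these would come from real test calls.
results = [
    CallResult("authentication", True),
    CallResult("handoff-to-agent", False, "queue transfer never connected"),
]
exit_code = gate(results)
print(exit_code)  # prints 1
```

In a real pipeline step the script would finish with `sys.exit(exit_code)`, so one failed call is enough to stop the deploy.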

Unit testing. Not us. Your dev team. Carry on.

The point of the inverted pyramid isn't that unit tests don't matter. They do. It's that the cost ratio you're used to from web software doesn't hold here. End-to-end tests on a phone line stopped being expensive and slow the moment we built telephony-native infrastructure for them. There's no longer a good reason to have only three of them.

Why the existing tooling doesn't do this

I spent two years leading engineering on a large Amazon Connect implementation. We had complicated dynamic flows, regulated handoffs, a roadmap full of changes the business wanted to ship next quarter. The thing that constantly slowed us down wasn't building. It was proving that what we built still worked.

The incumbent tools in this space were built for a different decade. They expected long-running engagements, fixed test suites, and humans clicking through pre-recorded scripts. They didn't have APIs in any meaningful sense. You couldn't spin them up for an afternoon, run a thousand calls, and switch them off. You certainly couldn't put them in a pipeline.

We lobbied. Nothing changed. So we built Nopaque.

Modern. On-demand. Pay for what you use. SaaS built for speed and scale, not legacy software.

What "real-world assurance" actually buys you

Three things, mostly.

Confidence to ship. When the team in charge of the IVR knows they can run the full regression suite as a real call from London on the actual phone network in seven minutes, the conversation around releases changes. You stop batching up fortnightly drops because you're scared of what you'll break. You ship the small change you wanted to ship.

Compliance you can evidence. If you're in financial services, healthcare or utilities, you already have rules about how the customer journey on the phone is allowed to behave. Disclosures, vulnerable-customer routing, recording notifications. Our compliance test suites give you a repeatable, dated, machine-readable record that the journey did the right thing. The auditor likes a CSV.
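For a sense of what a dated, machine-readable record might contain, here is a minimal sketch using Python's standard `csv` module. The column names, the sample checks and the timestamp are all assumptions made for illustration, not the format of our actual reports.

```python
import csv
import io
from datetime import datetime, timezone

# Hypothetical column layout for an evidence file.
FIELDS = ["checked_at", "journey", "requirement", "outcome"]

def evidence_csv(checks, checked_at):
    """Render (journey, requirement, ok) checks as CSV text, one dated row each."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for journey, requirement, ok in checks:
        writer.writerow({
            "checked_at": checked_at.isoformat(),  # ISO 8601, timezone included
            "journey": journey,
            "requirement": requirement,
            "outcome": "pass" if ok else "fail",
        })
    return buf.getvalue()

# Made-up sample data.
checks = [
    ("payments", "recording notification played", True),
    ("payments", "vulnerable-customer routing offered", False),
]
report = evidence_csv(checks, datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc))
print(report)
```

Carrying the timestamp and its timezone on every row means each line still stands on its own when someone filters or sorts the file later.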

Observability for the bit nobody can see. Most contact centre observability stops at the application layer. It tells you the function call ran. It doesn't tell you what the customer actually heard. Our reports do. Latency, ASR confidence, dead air, whether the bot interrupted the caller, whether the handoff actually completed. From the customer's perspective, on the customer's network, on a real phone.
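Two of those measures, dead air and interruptions, are easy to reason about once you have a timeline of who spoke when. The sketch below assumes a hypothetical `Segment` record with start and end times in seconds. It illustrates the idea and is not how our reports are computed.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    """Hypothetical speech segment on a call timeline."""
    speaker: str   # "caller" or "bot"
    start: float   # seconds from call start
    end: float

def longest_dead_air(segments):
    """Longest stretch, in seconds, between segments when nobody is speaking."""
    longest, covered_until = 0.0, None
    for seg in sorted(segments, key=lambda s: s.start):
        if covered_until is not None and seg.start > covered_until:
            longest = max(longest, seg.start - covered_until)
        covered_until = seg.end if covered_until is None else max(covered_until, seg.end)
    return longest

def bot_interruptions(segments):
    """Bot segments that begin while a caller segment is still in progress."""
    callers = [s for s in segments if s.speaker == "caller"]
    return [
        b for b in segments
        if b.speaker == "bot" and any(c.start < b.start < c.end for c in callers)
    ]

# Made-up timeline for one call.
call = [
    Segment("bot", 0.0, 3.0),
    Segment("caller", 3.5, 6.0),
    Segment("bot", 5.0, 8.0),      # starts before the caller has finished
    Segment("caller", 12.0, 13.0),
]
print(longest_dead_air(call))        # prints 4.0
print(len(bot_interruptions(call)))  # prints 1
```

This version only measures silence between segments. Silence before the first word or after the last one would need the call's own start and end times.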

How teams actually adopt this

The honest answer is: in stages. Most teams start with a single high-value journey: a payment flow, an authentication step, the bit of the IVR that a regulator cares about. They put that under continuous test. From there it tends to spread. Once one team has dial-tone-to-resolution coverage on a real call, the team next door wants it too.

The SDKs are designed for that pattern. Anything you can do in our UI you can do programmatically. Build a use case once, run it from a notebook today, run it from your CI tomorrow, run it from a scheduled monitor next week. No bespoke integrations, no professional-services quote, no six-month onboarding. You can be making real calls into your own service inside an afternoon, and the pricing page is published so you don't need to ask us what it costs.
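One way to picture "build once, run anywhere" is a use case held as plain data with the call mechanism passed in. Everything in this sketch is hypothetical: `UseCase`, its fields, the step strings, the placeholder number and `fake_call` are assumptions for illustration, not the SDK's interface.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass
class UseCase:
    """Hypothetical use-case definition; every field name is an assumption."""
    name: str
    dial: str
    steps: list = field(default_factory=list)

def run(use_case, place_call):
    """Run a use case through an injected call function and return its result."""
    return place_call(use_case.dial, use_case.steps)

def fake_call(number, steps):
    """Stand-in for a real call so the sketch runs offline."""
    return {"number": number, "steps_run": len(steps), "passed": True}

# Placeholder number and steps, not a real service.
payment = UseCase("card-payment", "+440000000000", ["say: pay a bill", "dtmf: 1234"])

# The definition round-trips through JSON, so a notebook, a CI job and a
# scheduled monitor could all load one file instead of keeping three copies.
restored = UseCase(**json.loads(json.dumps(asdict(payment))))
result = run(restored, fake_call)
print(result)
```

Keeping the definition separate from the thing that places the call is what lets the same test move from a notebook to CI to a monitor without being rewritten.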

Test earlier. Release with confidence. Stay compliant.

That line at the bottom of the infographic isn't a slogan. It's a rough description of what changes when you put a real customer-perspective test at the top of your SDLC instead of clinging to the old shape.

If you run a contact centre, whether agentic, traditional or hybrid, and the testing story keeps slowing down everything else you want to do, come and talk to us. Or just register for a free account and try a call.

Clarity matters. Especially on the phone.

Phil Smith is co-founder of Nopaque. Before Nopaque he led engineering on a UK bank's Amazon Connect implementation, which is where most of the bruises in this post came from.
